An oft-cited challenge of online learning is that facilitators can’t ‘read the room’ the way they can when teaching face to face. They don’t get the same immediate feedback about whether learners are getting it or not. But we’ve got an ace up our sleeve: the Class Console and the learning analytics and data within it.

With the Class Console, facilitators can assess overall learner performance. They can see how well students are learning and what particular difficulties they might be having. This gives facilitators insight into which learners might be at risk or need support, so they can step in and give timely feedback.

In the rest of this post, we’ll be looking at how our facilitator, Kyle, could use the Class Console to support engagement. Let’s find out a little more about Kyle, his course, and his learners.

Kyle’s course

Kyle is running a course on bike maintenance and repairs. Here’s an outline of his course:

We can see that Kyle has set a few welcome activities for the first week. Learners then get into the crux of the course in weeks 2 to 4, where they complete a few low-stakes, automarked practice tasks (non-assessed). At the end of week 4, learners have a key practice task that scaffolds them towards their assignment, which is due in week 7.

Let’s take a look at how Kyle will be using the Class Console across the course.

Looking for access

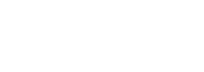

In the first week or so, we want to make sure that all learners are able to log in and get started in the course. So, how do we spot the learners who might need a bit of extra help or nudging?

If we sort our learner list by Last session in ascending order, we can see which learners are yet to log in.

Here we can see that Lisa and Gabby are yet to log in, so Kyle can flick out a quick email to them with instructions on how to log in.

Looking for engagement

Over the next few weeks Kyle will be more interested in whether learners are actually engaging with the course content.

In Kyle’s course, ‘engaging’ means that learners:

- are logging in at least semi-regularly

- have contributed to the welcome discussions

- have attempted a few of the automarked tasks.

For this scenario, Kyle can take a systematic approach and scan the pods for each learner in his learner list. Let’s take a look at a few examples.

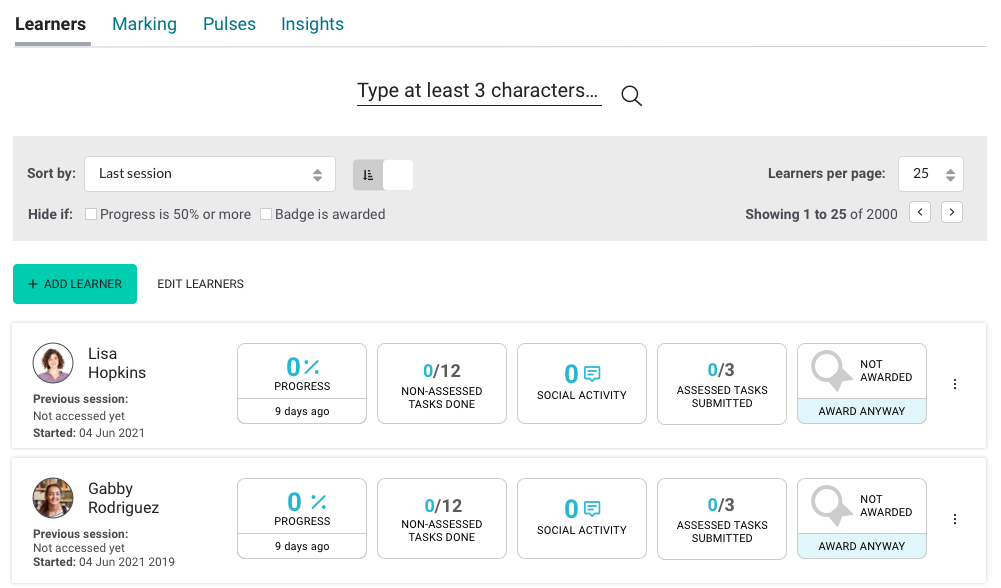

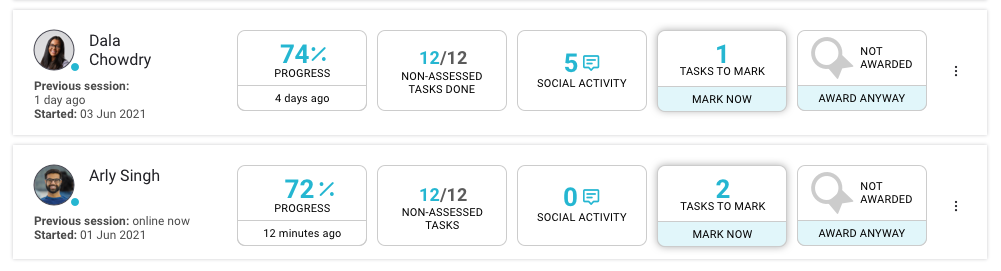

Arly's engagement

Looking at Arly’s data, we can see that he hasn’t been contributing to the welcome discussions, although he has been logging in regularly and completing the practice tasks. He’s made good progress.

Kyle might decide to check in on Arly and have an informal conversation about why Kyle values the discussions: they promote connectedness, which can support achievement and is especially useful in online learning. You can see more on this idea in one of our previous posts: Building an online community.

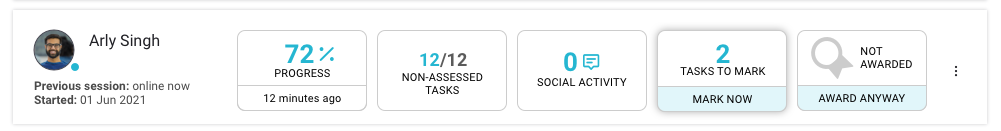

Floyd's engagement

Now let’s take a look at another learner, Floyd. Here we can see that Floyd has been logging in and has made some progress, but he hasn’t been completing the practice tasks.

To “gain” the progress for a page, a learner has to complete all the tasks on that page. This is probably why Floyd’s progress is slightly lower than that of the rest of the class.

Kyle can reach out to Floyd to explain the importance of the practice tasks and encourage Floyd to give them a go.

Dala's engagement

Dala, on the other hand, has been getting really involved in all areas of the course.

Kyle can send her a quick “keep up the amazing work” email to encourage her to stay engaged.

Looking for patterns

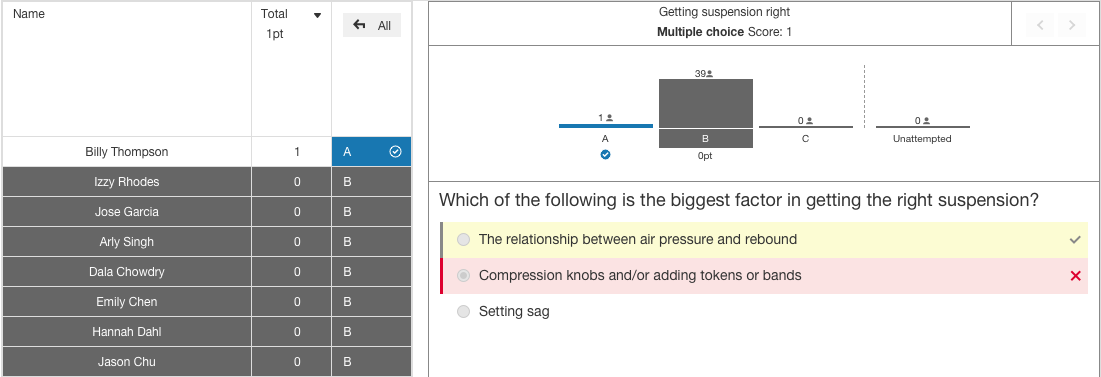

As learners work through the practice tasks, Kyle wonders how the class as a whole is answering. He can take a look at the analysis feature for a task.

Here we can see that the large majority of the class answered this task incorrectly, choosing an answer based on a common misconception. Having spotted this, Kyle can quickly adjust and add to his teaching to explore the misconception with the class.

Looking for readiness

As the assessment due date draws near, Kyle wants to make sure his learners are ready to give it their best. He cleverly included a key practice task that leads into the assessment. This is also where he gives learners personalised formative feedback to help guide them ahead of their assessment.

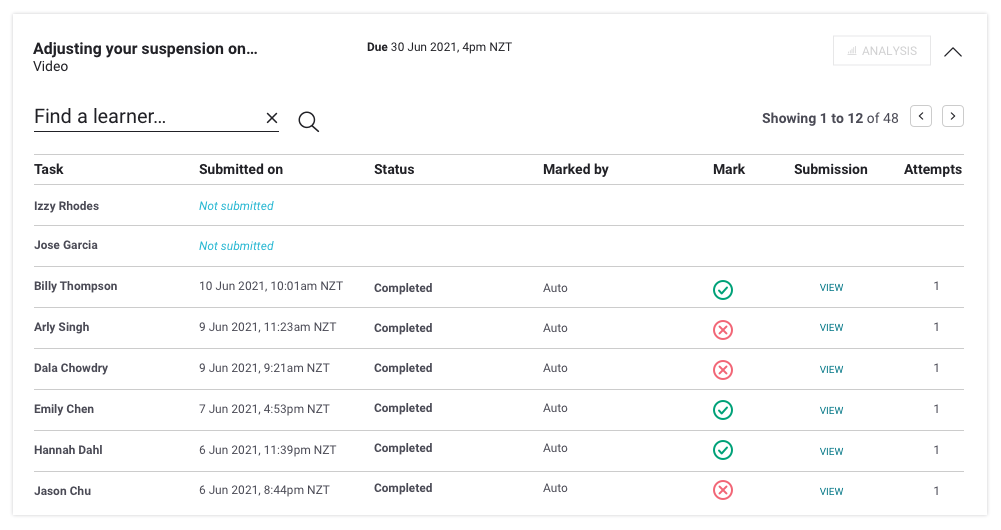

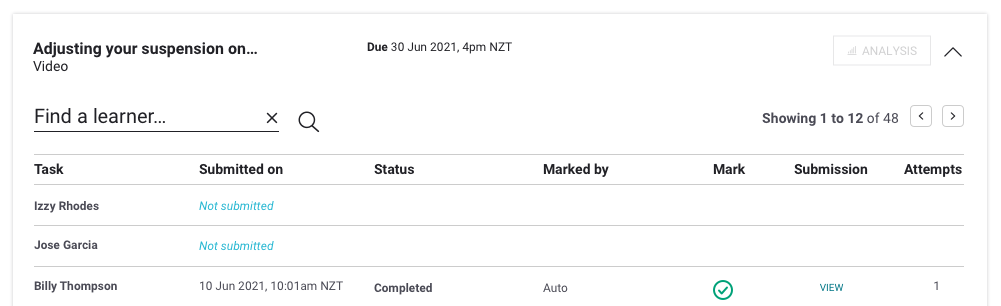

Kyle can look first at how learners are going with the automarked tasks.

Then he can dive deeper into learners’ responses to the key practice task that leads into the assessment, adding personalised feedback to help each learner get ready.

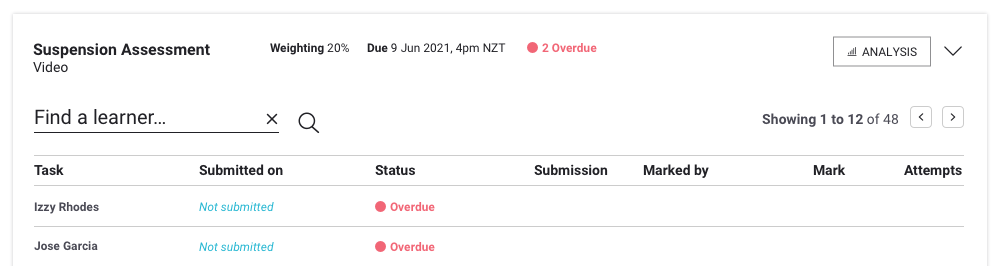

Kyle’s spotted that Izzy and Jose are yet to submit.

He can send them a quick reminder.

Looking for achievement

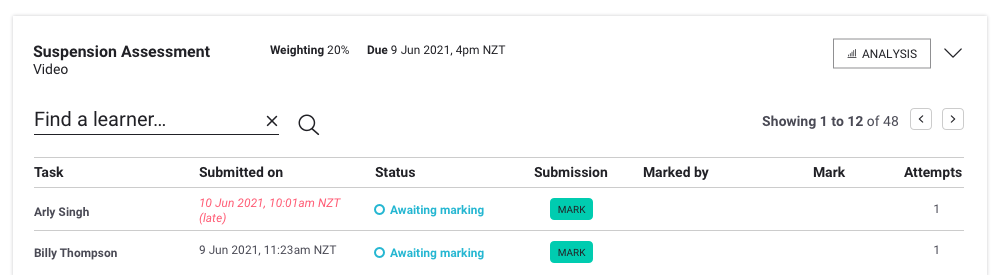

As learners submit their assessments, Kyle can easily spot what needs marking.

And he can see which learners haven’t submitted.

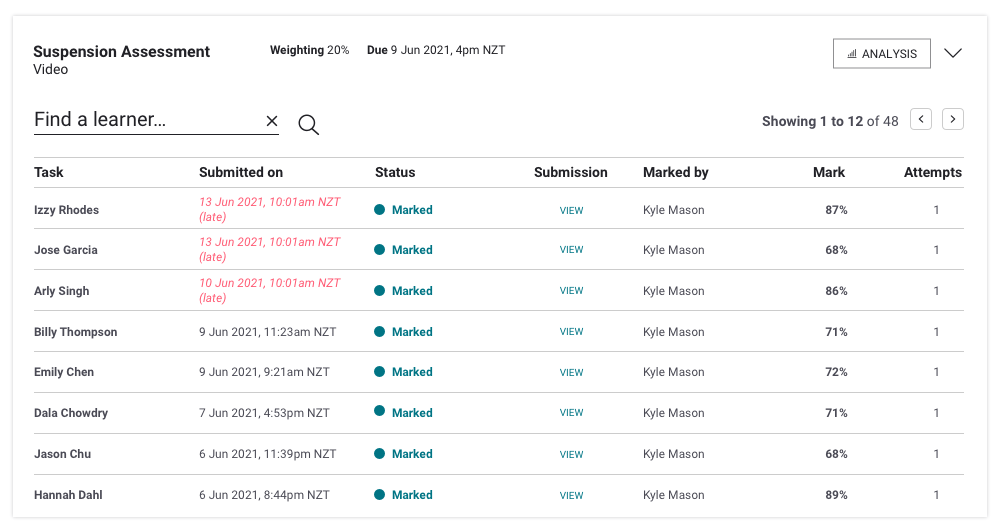

Once he’s marked the assessments, Kyle can see a clear picture of the results across the class.

Looking for reflection

The class has moved on to the next course, but Kyle is keen to reflect on how the teaching and learning went so he can improve the course for next time. He heads to Insights.

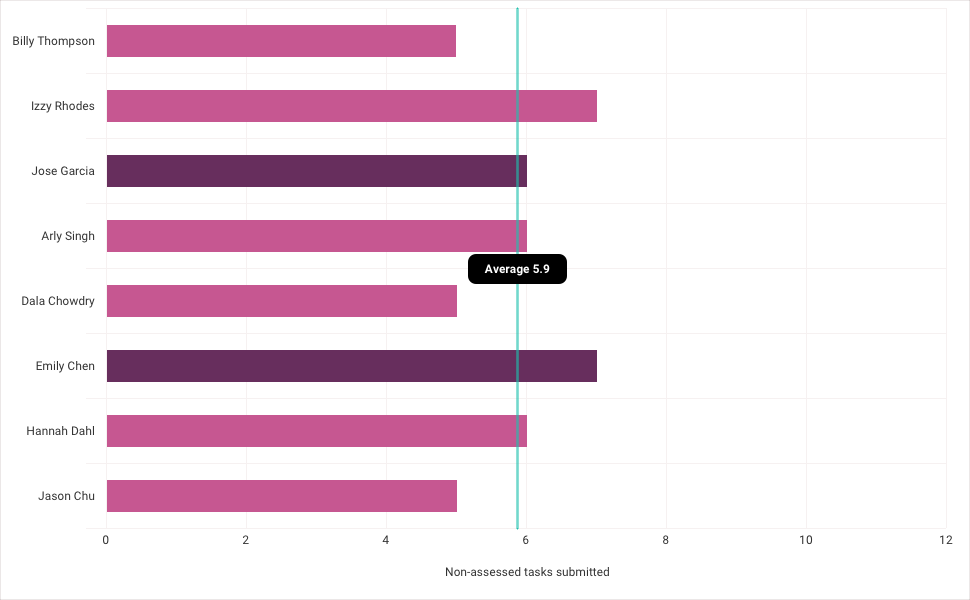

For example, looking at the number of non-assessed tasks submitted (and knowing that there were 12 non-assessed tasks in total), we can see that most of the class did not submit all of them. However, the large majority of the class still achieved in the assessment. This might prompt Kyle to rethink how many non-assessed tasks the course needs, and to analyse which of those tasks contribute directly to the learning outcomes and the assessment.

Summary

Kyle’s story shows how the Class Console can inform your facilitation and interventions throughout a course: from making sure learners are engaged right from the start, to encouraging contributions and completion, celebrating achievements, and reflecting on challenges.

Of course, your story might be different from Kyle’s. But the data is there. Ready for you. It’s up to you how you use it.